Utility WAN Architecture for AI Workloads

By Kenneth Rabedeau, DMTS, Head of Energy Segment, Nokia

Utility WAN architecture determines whether AI inference, edge compute, and substation control traffic maintain deterministic latency under exponential bandwidth growth, optical transport scaling, and secure OT segmentation constraints.

AI is not simply increasing bandwidth demand across utility networks. It is redefining the tolerance envelope within which grid control remains trustworthy. When inference engines, distributed analytics, and high-resolution telemetry converge on substations and regional cores, the WAN becomes a control dependency rather than a communications utility.

Operators do not experience WAN saturation as an inconvenience. They experience it as distorted situational awareness. If congestion arises during feeder switching or when DER volatility is high, topology views and state estimation outputs lag physical conditions. That latency gap is where misoperation risk begins.

The engineering decision is singular. Should the WAN continue as best-effort transport, upgraded incrementally, or should it be architected as deterministic infrastructure capable of sustaining AI workloads without eroding OT control confidence?

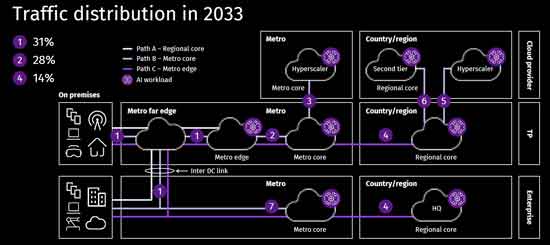

AI changes traffic shape. Training centralizes traffic in large facilities. Inference distributes traffic toward metro edges and substations. What were once periodic supervisory exchanges now become continuous model queries, waveform transfers, and DER performance streams.

During storm restoration or high load volatility, telemetry volumes can rise to several times baseline. If the WAN is provisioned only for average utilization, congestion occurs at the exact moment when switching authority must be precise. Latency rises. Jitter increases. Time synchronization drifts. Protection coordination logic then executes on a stale topology and may isolate healthy feeder sections.
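The provisioning argument can be made concrete with a small headroom check. The sketch below is illustrative only: the function names, the 70 percent utilization ceiling, and the traffic figures are assumptions chosen for the example, not values from any standard or vendor.

```python
def peak_utilization(baseline_mbps: float, surge_multiplier: float,
                     link_capacity_mbps: float) -> float:
    """Return the fraction of link capacity used when telemetry surges."""
    if link_capacity_mbps <= 0:
        raise ValueError("link capacity must be positive")
    return baseline_mbps * surge_multiplier / link_capacity_mbps


def has_surge_headroom(baseline_mbps: float, surge_multiplier: float,
                       link_capacity_mbps: float,
                       max_utilization: float = 0.7) -> bool:
    """True if the surged load stays at or below the utilization ceiling.

    The 0.7 default is an assumed planning ceiling, not a standard.
    """
    return peak_utilization(baseline_mbps, surge_multiplier,
                            link_capacity_mbps) <= max_utilization


if __name__ == "__main__":
    # Hypothetical substation backhaul: 200 Mb/s baseline on a 1 Gb/s link.
    print(peak_utilization(200, 1, 1000))    # average-load view
    print(has_surge_headroom(200, 4, 1000))  # storm-restoration view
```

With these assumed figures, the link sits at 20 percent utilization on an average day but reaches 80 percent under a fourfold surge, which exceeds the assumed ceiling. A design sized to the average would pass review and still congest during restoration.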

Optical transport scaling, therefore, becomes foundational. Modular coherent optics that scale from hundreds of gigabits toward multi-terabit capacity allow utilities to expand bandwidth without rebuilding topology. The tradeoff is clear. Underinvest and deterministic performance collapses under peak load. Overinvest and capital recovery models tighten. There is no neutral option.

Latency discipline is not defined solely by speed. It is defined by delay that stays bounded within the application's tolerance. Edge inference supporting feeder automation may require a consistent response within 10 to 20 milliseconds. Many legacy WAN architectures still exceed 50 milliseconds under congestion.
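The difference between a fast average and a bounded delay is easy to show with a handful of samples. The following is a minimal sketch: the sample values and the 20 millisecond tolerance are assumptions for illustration, and the function names are hypothetical.

```python
from statistics import mean
from typing import Sequence


def mean_latency_ms(samples_ms: Sequence[float]) -> float:
    """Average latency, the figure a speed-only view would report."""
    return mean(samples_ms)


def violates_bound(samples_ms: Sequence[float], tolerance_ms: float) -> bool:
    """True if any single sample exceeds the tolerance.

    A bounded-delay requirement is judged on the worst case, not the mean.
    """
    if not samples_ms:
        raise ValueError("at least one latency sample is required")
    return max(samples_ms) > tolerance_ms


if __name__ == "__main__":
    # Hypothetical round-trip samples with one congestion spike.
    samples = [8, 9, 10, 9, 55, 8]
    print(mean_latency_ms(samples))     # average looks acceptable
    print(violates_bound(samples, 20))  # the spike still breaks the bound
```

In this example the mean is 16.5 milliseconds, inside the assumed tolerance, yet the single 55 millisecond spike violates the bound. A real deployment would more likely track a high percentile over a measurement window, but the principle is the same.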

That threshold difference is operationally significant. When inference results arrive outside the control window, automation transitions from deterministic to probabilistic behavior. A single delay spike during feeder reconfiguration can propagate through state recalculation, trigger unnecessary sectionalizing, and extend outage duration.

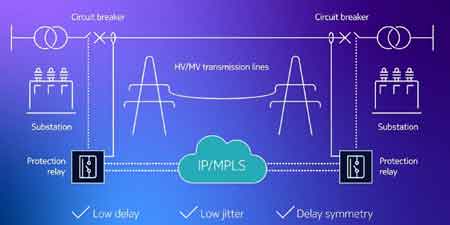

Supervisory timing integrity intersects directly with security posture. Compromised timing or manipulated transport latency can degrade control visibility. This is why deterministic transport design must align with SCADA Cybersecurity, where supervisory channel protection and control plane integrity are inseparable from performance discipline.

Below tolerance, the system stabilizes. Above tolerance, switching authority degrades. That boundary defines whether AI enhances reliability or undermines it.

AI inference is moving closer to substations to reduce transport delay and WAN backhaul strain. Local inference shortens response loops but expands architectural complexity. Each edge node becomes a lifecycle domain with firmware governance, model distribution cadence, and synchronization requirements.

In feeders with high inverter penetration, rapid impedance shifts and oscillatory conditions introduce additional uncertainty. Secure coordination of distributed assets must align with DER Cybersecurity, because DER-rich environments expand east-west traffic and segmentation requirements across the WAN.

An operational edge case emerges when partial WAN segmentation isolates an edge inference node. If model updates are delayed beyond acceptable thresholds, stale weights can produce false positives that trigger unnecessary isolation, or false negatives that allow instability to propagate. The WAN must therefore support synchronized model propagation and deterministic timing guarantees across distributed compute locations.
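One way an edge node might guard against this case is a simple staleness check on its model weights. The sketch below is an assumption-laden illustration: the one-hour maximum age, the mode names, and the idea of falling back to a conservative mode are hypothetical policy choices, not something prescribed by this article or by any product.

```python
from datetime import datetime, timedelta, timezone


def model_is_stale(last_update: datetime, now: datetime,
                   max_age: timedelta) -> bool:
    """True if the weights are older than the allowed age."""
    return now - last_update > max_age


def select_mode(last_update: datetime, now: datetime,
                max_age: timedelta) -> str:
    """Pick an operating mode for the edge node.

    'conservative' is a hypothetical fallback in which inference output
    is not allowed to drive isolation decisions on its own.
    """
    if model_is_stale(last_update, now, max_age):
        return "conservative"
    return "inference"


if __name__ == "__main__":
    now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
    max_age = timedelta(hours=1)  # assumed threshold
    print(select_mode(now - timedelta(minutes=5), now, max_age))
    print(select_mode(now - timedelta(hours=6), now, max_age))
```

The check only works if the edge node's clock is trustworthy, which is one reason model propagation and time synchronization have to be designed together.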

The unresolved decision boundary remains. How much inference belongs at the edge versus the regional core? Localize compute and reduce latency but increase distributed governance, or centralize compute and risk latency volatility during peak load. Architecture must reflect feeder criticality and DER density, not abstract preference.

Legacy microwave and leased circuits designed for low-bandwidth supervisory traffic cannot sustain the growth of AI-driven telemetry. Substation backhaul must evolve into scalable optical transport capable of deterministic performance under load.

Security cannot be layered after scaling. As AI workloads increase, east-west traffic between substations, regional cores, and enterprise systems becomes structural. Cybersecurity for Utilities establishes the requirement for layered protection that extends into transport design itself. Without strict OT segmentation, the WAN becomes a lateral movement channel that compromises both performance and security domains.
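Strict segmentation is commonly expressed as a default-deny policy between zones. The sketch below illustrates the idea only: the zone names and the allowed flows are hypothetical, and a real deployment would enforce this in network equipment rather than in application code.

```python
from typing import FrozenSet, Tuple

Flow = Tuple[str, str]

# Hypothetical allow list: any zone pair not listed here is denied.
ALLOWED_FLOWS: FrozenSet[Flow] = frozenset({
    ("substation", "regional_core"),
    ("regional_core", "substation"),
    ("regional_core", "enterprise_dmz"),
})


def is_flow_allowed(src_zone: str, dst_zone: str,
                    allowed: FrozenSet[Flow] = ALLOWED_FLOWS) -> bool:
    """Default-deny check: only explicitly listed zone pairs may talk."""
    return (src_zone, dst_zone) in allowed


if __name__ == "__main__":
    print(is_flow_allowed("substation", "regional_core"))
    # Substation-to-substation traffic is not listed, so it is denied.
    print(is_flow_allowed("substation", "substation"))
    # No direct path from a substation into the enterprise side.
    print(is_flow_allowed("substation", "enterprise_dmz"))
```

Because nothing is permitted unless listed, growth in east-west traffic requires a deliberate policy change instead of opening a path by default.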

A utility WAN architecture must integrate encrypted transport, strict QoS enforcement, time synchronization discipline, and segmented OT domains while scaling bandwidth. Availability targets approaching six nines (99.9999 percent) cut allowable downtime to roughly half a minute per year, compared with nearly nine hours at three nines. That quantitative difference alters restoration modeling assumptions and elevates WAN reliability into the protection layer itself.
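The downtime arithmetic behind availability targets is straightforward to reproduce. The sketch below assumes a 365-day year and treats "N nines" as an availability of 1 minus 10 to the power of minus N; the function name is hypothetical.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600  # non-leap year, assumed for simplicity


def annual_downtime_seconds(nines: int) -> float:
    """Allowable downtime per year for an availability of N nines.

    Three nines means 99.9 percent, six nines means 99.9999 percent.
    """
    if nines < 1:
        raise ValueError("nines must be at least 1")
    unavailability = 10.0 ** -nines
    return SECONDS_PER_YEAR * unavailability


if __name__ == "__main__":
    for n in (3, 4, 5, 6):
        seconds = annual_downtime_seconds(n)
        print(f"{n} nines: {seconds:.1f} s/year ({seconds / 3600:.3f} h)")
```

Three nines allows about 8.76 hours of downtime per year, while six nines allows about 31.5 seconds.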

The decision gravity is explicit. If AI becomes integral to switching logic, restoration sequencing, and predictive control, the WAN is no longer infrastructure supporting protection. It becomes part of the protection system. A WAN that cannot guarantee bounded latency, scalable optical headroom, synchronized edge inference, and hardened OT segmentation will eventually erode operator trust. Once trust in telemetry integrity erodes, automation authority collapses.
