Enterprise AI Governance for Utilities

By Saul Melara, Director of Global Sector Architecture, Oracle Utilities

Enterprise AI Governance for Utilities establishes model lifecycle controls, OT boundary enforcement, and data governance to prevent model drift, uncontrolled inference, and telemetry distortion that degrade grid reliability and regulatory compliance.

Enterprise AI Governance for Utilities determines whether predictive models strengthen grid control or quietly degrade it. In modern distribution environments, inference engines now influence load forecasting, DER detection, anomaly billing, and dispatch optimization. When model lifecycle discipline is weak, drift becomes invisible until switching errors, voltage instability, or misclassified demand signals surface in operations.

Integrated AI platforms can process hundreds of millions of interval records monthly. In the referenced deployment, monthly datasets exceeded one billion interval records across more than 450,000 meters. At that scale, even a one percent inference degradation can misclassify thousands of EV or AC loads, distorting feeder forecasts and transformer loading assumptions.
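The scale argument can be checked with simple arithmetic. The sketch below assumes, for illustration only, that "one percent inference degradation" means one percent of meters receive a wrong classification; the function name is hypothetical, and only the meter count comes from the deployment described above.

```python
def misclassified_meters(meter_count: int, degradation_rate: float) -> int:
    """Estimate how many meters are misclassified at a given degradation rate."""
    if not 0.0 <= degradation_rate <= 1.0:
        raise ValueError("degradation_rate must be between 0 and 1")
    return round(meter_count * degradation_rate)


METER_COUNT = 450_000  # meter population cited for the referenced deployment

# One percent degradation, interpreted per meter (an assumption of this sketch).
print(misclassified_meters(METER_COUNT, 0.01))
```

Under that reading, one percent of 450,000 meters is 4,500 meters, which is consistent with the "thousands" of misclassified loads cited above.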

The decision problem is not whether to deploy AI. It is whether enterprise governance can prevent uncontrolled inference from crossing the OT boundary and altering operational truth without validation.

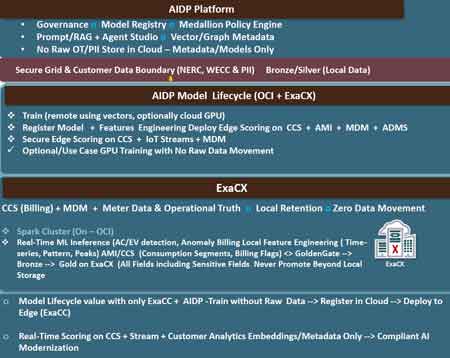

Enterprise AI Governance for Utilities begins with formal model registry control, feature lineage, and lifecycle enforcement. The presentation architecture illustrates training in controlled environments using vector metadata while preventing the movement of raw OT data beyond secure local boundaries. This constraint limits exposure while preserving compliance with regional reliability standards.

Model drift occurs when seasonal load shifts, DER penetration growth, or behavioral change alter input distributions. For example, EV plateau detection thresholds tuned in winter may overclassify summer overnight consumption when AC loads compress baselines. Without continuous performance monitoring, false positives propagate into planning models.
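One common way to monitor a shift in input distributions is the population stability index (PSI). The article does not name a specific drift metric, so the following is a minimal sketch rather than the method used in the referenced deployment. The bin edges, the sample overnight load values (kW), and the 0.25 alert level (a widely used rule of thumb) are all illustrative assumptions.

```python
import math


def population_stability_index(baseline, current, edges):
    """PSI between two samples binned on shared edges (last bin is right-inclusive)."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")

    def bin_shares(values):
        counts = [0] * (len(edges) - 1)
        for v in values:
            for i in range(len(counts)):
                is_last = i == len(counts) - 1
                if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                    counts[i] += 1
                    break
        total = sum(counts)
        if total == 0:
            raise ValueError("no values fall inside the bin edges")
        # A small floor keeps empty bins from producing log(0).
        return [max(c / total, 1e-6) for c in counts]

    base, cur = bin_shares(baseline), bin_shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


# Hypothetical overnight load samples in kW, for illustration only.
winter = [0.5, 0.6, 0.7, 0.8, 1.2, 1.4, 0.9, 0.6]
summer = [1.6, 1.8, 2.2, 2.4, 1.9, 2.6, 2.1, 1.7]
edges = [0.0, 1.0, 2.0, 3.0]

psi = population_stability_index(winter, summer, edges)
print("drift alert" if psi > 0.25 else "stable")
```

A PSI of zero means the binned distributions match. In practice the alert level would be set by the governance board rather than taken from a rule of thumb.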

Governance requires a defined retraining cadence, threshold recalibration discipline, and rollback capability. A model that detects 5,000 EV meters in one quarter must demonstrate stable precision across seasonal transitions before its outputs influence transformer upgrade decisions.
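The requirement for stable precision across seasons can be expressed as a promotion gate. This is a hedged sketch: the class and function names, the 0.90 precision floor, and the 0.05 allowed spread are hypothetical placeholders, and the confirmed and rejected counts are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SeasonResult:
    season: str
    true_positives: int   # flagged meters confirmed as EV
    false_positives: int  # flagged meters that were not EV

    @property
    def precision(self) -> float:
        flagged = self.true_positives + self.false_positives
        return self.true_positives / flagged if flagged else 0.0


def passes_seasonal_gate(results, min_precision=0.90, max_spread=0.05) -> bool:
    """Allow promotion only if precision is high and stable in every season."""
    precisions = [r.precision for r in results]
    if not precisions:
        return False
    spread = max(precisions) - min(precisions)
    return min(precisions) >= min_precision and spread <= max_spread


# Hypothetical validation results for a model flagging 5,000 meters per season.
drifting = [SeasonResult("winter", 4750, 250), SeasonResult("summer", 4100, 900)]
stable = [SeasonResult("winter", 4750, 250), SeasonResult("summer", 4650, 350)]

print(passes_seasonal_gate(drifting))  # summer precision falls below the floor
print(passes_seasonal_gate(stable))
```

Checking both the floor and the spread matters: a model can clear the floor in every season and still swing enough between seasons to mislead transformer upgrade planning.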

This is a deployment tradeoff. Aggressive retraining improves adaptability but increases validation overhead and audit complexity. Conservative retraining reduces operational noise but increases drift risk. Governance defines the acceptable balance.

The underlying data architecture follows a medallion discipline, moving raw operational telemetry through structured bronze, silver, and gold layers before model training occurs. Bronze layers retain immutable ingestion records, silver layers enforce validation and normalization, and gold layers contain feature-ready datasets used for scoring and retraining.

Governance must ensure that model inputs originate from controlled gold-level transformations rather than raw telemetry snapshots, or drift detection becomes structurally unreliable.
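A training or scoring job can enforce this rule before it reads any data. The sketch below assumes each dataset carries a layer tag in its metadata; the `Layer`, `DatasetRef`, and `require_gold_inputs` names and the dataset names are hypothetical and do not come from any specific platform.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    BRONZE = "bronze"  # immutable ingestion records
    SILVER = "silver"  # validated and normalized
    GOLD = "gold"      # feature-ready


@dataclass(frozen=True)
class DatasetRef:
    name: str
    layer: Layer
    version: str


def require_gold_inputs(inputs) -> None:
    """Raise if any model input is not a gold-layer dataset."""
    offenders = [d.name for d in inputs if d.layer is not Layer.GOLD]
    if offenders:
        raise ValueError(f"non-gold inputs rejected: {', '.join(offenders)}")


# Hypothetical dataset references for illustration.
features = DatasetRef("ev_plateau_features", Layer.GOLD, "v12")
raw_snapshot = DatasetRef("ami_raw_snapshot", Layer.BRONZE, "2024-07")

require_gold_inputs([features])  # passes silently
try:
    require_gold_inputs([features, raw_snapshot])
except ValueError as err:
    print(err)
```

Failing closed at the job boundary keeps raw snapshots out of both training and the drift baselines computed from the same inputs.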

Enterprise governance separates training, metadata management, and inference deployment across defined control zones. The architecture in the presentation enforces local retention for sensitive billing and operational data, while registering models centrally and deploying scoring engines at the edge.

This prevents raw OT or PII data from migrating into uncontrolled environments. It also ensures that edge inference on CCS, MDM, ADMS, and streaming telemetry operates within secured boundaries.

Without this separation, an updated forecasting model could propagate directly into dispatch logic, altering switching priorities based on unvalidated load assumptions. A cascading operational consequence emerges if DER overgeneration is underpredicted and voltage regulators respond late, amplifying instability during peak EV charging windows.

Governance, therefore, functions as a containment layer, not a compliance checkbox.

Telemetry distortion is the most underestimated AI governance failure mode. If anomaly billing models misinterpret transmission gaps as legitimate consumption reduction, feeder load curves flatten artificially. Planning systems then underestimate transformer stress.

The architecture integrates streaming ingestion, feature engineering, and inference scoring across ADMS and MDM layers. Enterprise control must verify that each inference is traceable to versioned features and validated datasets.
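One way to make each inference traceable is to stamp it with a deterministic fingerprint of the model, feature, and dataset versions that produced it. This is an illustrative sketch using only the Python standard library; the function name, the version identifiers, and the choice of SHA-256 are assumptions, not details of the architecture described here.

```python
import hashlib
import json


def lineage_fingerprint(model_version: str, feature_versions: dict, dataset_version: str) -> str:
    """Return a stable SHA-256 digest of the versions behind an inference."""
    payload = json.dumps(
        {"model": model_version, "features": feature_versions, "dataset": dataset_version},
        sort_keys=True,            # key order must not change the digest
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Hypothetical version identifiers for illustration.
fingerprint = lineage_fingerprint(
    "ev-detector-3.1",
    {"overnight_plateau": "v4", "weekday_ratio": "v2"},
    "gold-2024-07",
)
print(fingerprint[:16])
```

Storing the fingerprint alongside every score lets an auditor confirm that two inferences came from the same versioned inputs, or pinpoint which component changed when they did not.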

In environments that integrate cybersecurity for utilities and SCADA cybersecurity, AI outputs must inherit the same segmentation and authentication discipline as SCADA telemetry. Model inference is now part of the control signal chain.

An operational edge case arises when DER detection models trained on historical baselines fail during wildfire-related load curtailment. If load suppression is misclassified as DER export, dispatch planning may overestimate available supply.

Governance must require stress testing against abnormal system states, not only nominal performance.

Enterprise governance aligns AI data platforms with existing control frameworks such as utility WAN architecture and autonomous utility networks. Data ingestion, streaming, and scoring cannot introduce latency or bandwidth congestion that interferes with deterministic operations.

For example, short-horizon forecasting that produces 7-day forward predictions must not compete with real-time SCADA polling intervals. If inference workloads saturate compute resources during fault storms, control room visibility degrades precisely when stability margins narrow.

Integrated model orchestration must therefore coordinate with integrated AI-driven solutions frameworks to ensure lifecycle transparency across billing, customer engagement, and grid operations domains.

This alignment introduces a governance constraint. AI cannot be evaluated solely on accuracy metrics. It must be evaluated on operational impact, latency tolerance, and failure isolation capability.

Enterprise AI Governance for Utilities ultimately allocates accountability. If EV load forecasts drive capital planning, the governance board must define acceptable forecast variance thresholds and specify which executive function is responsible for error consequences.

A model that improves forecast accuracy by three percent but introduces a five-minute validation delay during peak events may not be acceptable in certain feeder environments. Decision gravity increases when inference crosses from analytics into automated switching influence.

The enterprise risk question is direct. When model outputs shape dispatch, who certifies their reliability under abnormal conditions?

Governance frameworks must embed performance monitoring dashboards, model version auditing, and operational override capabilities. They must define when inference is advisory and when it is authoritative.
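The advisory versus authoritative distinction can be written down as an explicit rule rather than left to convention. The sketch below is one possible policy, not a prescribed one: the `Authority` enum, the function name, and the three input flags are hypothetical. It assumes that an operator override, an abnormal system state, or a missing validation each force advisory mode.

```python
from enum import Enum


class Authority(Enum):
    ADVISORY = "advisory"            # shown to operators, never acted on automatically
    AUTHORITATIVE = "authoritative"  # allowed to influence automated decisions


def resolve_authority(validated: bool, abnormal_state: bool, operator_override: bool) -> Authority:
    """Fail closed: any doubt downgrades the inference to advisory."""
    if operator_override or abnormal_state or not validated:
        return Authority.ADVISORY
    return Authority.AUTHORITATIVE


# A validated model during normal operations may act with authority.
print(resolve_authority(validated=True, abnormal_state=False, operator_override=False).value)

# The same model during a fault storm is downgraded to advisory.
print(resolve_authority(validated=True, abnormal_state=True, operator_override=False).value)
```

Writing the rule as a single function gives auditors one place to review and gives operators a predictable override path.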

Without that discipline, AI systems do not fail loudly. They erode state confidence gradually until an event exposes the gap between digital prediction and physical reality.

Enterprise AI Governance for Utilities prevents that erosion by enforcing lifecycle control, OT boundary containment, telemetry integrity validation, and quantified accountability before inference influences grid reliability.
