Nominal Voltage and AC and DC Standards

By William Conklin, Associate Editor

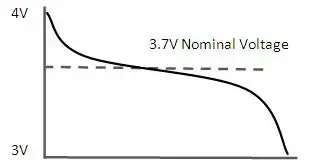

Nominal voltage is the standard voltage level assigned to electrical equipment or systems for identification. It helps ensure compatibility, performance, and safety across devices without indicating actual operating voltage, which may vary slightly.

Understanding the Role of Nominal Voltage in Power Systems

Nominal voltage (NV) is a vital aspect of electrical systems, serving as a reference point for designing, manufacturing, and testing equipment. Additionally, it affects the performance, efficiency, and safety of electrical systems, with voltage regulation ensuring that voltage levels (V) remain within acceptable tolerances. Understanding the differences between nominal voltage, operating voltage (OV), and rated voltage (RV) is crucial for professionals and enthusiasts to ensure the effective operation and maintenance of electrical systems. To better understand how nominal voltage fits within broader system design, see our comprehensive overview on voltage.

It is vital to understand the definition and importance of nominal voltage in electrical systems. NV refers to the standard voltage value assigned to an electrical system, typically the voltage at which electrical equipment is designed to operate under normal conditions. This value is crucial because it serves as a reference point for designing, manufacturing, and testing electrical equipment to ensure satisfactory performance. Maintaining voltage stability is essential; learn how voltage drop impacts performance and how to calculate it accurately.

Determining nominal voltage for electrical systems involves considering factors such as the type of equipment being used, the overall power requirements, and the voltage standards set by industry organizations. In some cases, the NV is set by the equipment manufacturer, while in others, it depends on the power grid's voltage levels. Standard nominal voltages have been established to streamline equipment design and manufacturing processes. Use our voltage drop calculator to determine how wiring distance and load affect the voltage delivered in real applications relative to the nominal value.

The terms NV, OV, and RV often appear in discussions about electrical systems. While they may seem interchangeable, each has a distinct meaning. As previously mentioned, nominal voltage is the standard voltage value designated for an electrical system. OV is the actual voltage at which equipment functions during operation. RV, unlike nominal voltage, is a limit rather than a reference: it is the maximum voltage at which equipment can operate continuously without sustaining damage. See how voltage regulators are used to maintain nominal levels and protect sensitive equipment.

Nominal voltage has a direct impact on the performance of electrical systems. Equipment designed for specific NVs operates safely within a particular voltage range. Operating outside this range can result in equipment damage, decreased efficiency, and even electrical fires. Therefore, it is essential to maintain the voltage levels as close as possible to the NV. Our guide to what is voltage explains the differences between nominal, operating, and actual voltage in power systems.

Electrical systems worldwide adhere to common nominal voltage (NV) standards for alternating current (AC) and direct current (DC) systems. For AC systems, common NVs include 110V, 220V, and 380V, while DC systems typically have NVs of 12V, 24V, or 48V. These standardized voltage levels enable the compatibility and interoperability of electrical equipment across various regions and industries. Learn how wattmeters and voltmeters help monitor nominal and real-time voltage values in field diagnostics.

Voltage regulation plays a critical role in controlling an electrical system's output voltage to ensure it remains within the acceptable voltage tolerance. Voltage regulation can be achieved through various means, including transformers, voltage regulators, and software algorithms in modern power systems. By keeping the voltage close to the NV, the electrical system operates more efficiently and safely, which helps extend the life of the equipment.

The voltage tolerance is the range of voltage deviation from the nominal voltage value within which equipment can still operate effectively and safely. Different electrical systems have different voltage tolerances depending on the nature of the equipment and its intended use. For instance, a residential electrical system may have a voltage tolerance of +/- 5%, while an industrial system might tolerate a deviation of +/- 10%. Keeping the voltage within the acceptable tolerance is crucial for the satisfactory operation of electrical equipment.
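To make the tolerance idea concrete, the short Python sketch below turns a nominal voltage and a percentage tolerance into lower and upper limits and checks a measured value against them. The function names and the 120V and 480V example systems are assumptions chosen for illustration; the +/- 5% and +/- 10% figures are the example tolerances mentioned above, not values taken from any particular standard.

```python
def tolerance_band(nominal_v: float, tolerance_pct: float) -> tuple[float, float]:
    """Return the (lower, upper) voltage limits for a symmetric tolerance."""
    if nominal_v <= 0 or tolerance_pct < 0:
        raise ValueError("nominal_v must be positive and tolerance_pct non-negative")
    delta = nominal_v * tolerance_pct / 100.0
    return nominal_v - delta, nominal_v + delta


def within_tolerance(measured_v: float, nominal_v: float, tolerance_pct: float) -> bool:
    """True if the measured voltage lies inside the tolerance band."""
    lower, upper = tolerance_band(nominal_v, tolerance_pct)
    return lower <= measured_v <= upper


if __name__ == "__main__":
    # Illustrative systems only: (label, nominal voltage, tolerance in percent).
    for label, nominal, tol in [("residential", 120.0, 5.0), ("industrial", 480.0, 10.0)]:
        low, high = tolerance_band(nominal, tol)
        print(f"{label}: {nominal:.0f} V nominal, acceptable {low:.1f} V to {high:.1f} V")
```

A symmetric band is used here for simplicity. Real equipment datasheets and utility standards sometimes specify different upper and lower limits, in which case the two limits would be passed in separately.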

Nominal Voltage (NV) vs Operating Voltage (OV)

Nominal voltage and OV are two related yet distinct concepts in electrical engineering. Understanding the difference is essential to ensuring the safe and efficient operation of electrical systems.

Nominal voltage refers to the standard or reference voltage level assigned to an electrical system, circuit, or device. It is the ideal voltage at which the equipment is designed to operate under normal conditions. NV is a baseline value that allows manufacturers, engineers, and technicians to design, test, and rate electrical equipment consistently.

Conversely, OV is the actual voltage level at which a particular electrical system, circuit, or device is functioning during real-time operation. Unlike nominal voltage, OV can fluctuate due to factors such as load changes, temperature variations, and power supply issues. Although electrical equipment is designed to operate optimally within a specified voltage range around the nominal voltage, slight variations in the operating voltage are often unavoidable.

In essence, NV serves as the benchmark or target voltage level, while OV reflects the real-world voltage conditions experienced by the electrical equipment. To ensure the efficient and safe operation of electrical systems, it is crucial to maintain the OV as close as possible to the NV. When the operating voltage falls within the acceptable voltage tolerance range, electrical equipment can function efficiently and safely without the risk of damage or performance degradation. Discover how alternating current systems rely on standardized nominal voltages like 120V or 240V for safe and consistent operation.
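One common way to express how far the OV has drifted from the NV is as a signed percentage of the nominal value. The sketch below assumes a 120V nominal system and a handful of made-up meter readings; both the function name and the data are hypothetical and serve only to show the arithmetic.

```python
def deviation_pct(operating_v: float, nominal_v: float) -> float:
    """Signed deviation of the operating voltage from nominal, in percent."""
    if nominal_v <= 0:
        raise ValueError("nominal_v must be positive")
    return (operating_v - nominal_v) / nominal_v * 100.0


if __name__ == "__main__":
    nominal = 120.0                          # assumed nominal voltage
    readings = [117.4, 120.0, 121.8, 125.1]  # hypothetical operating voltages
    for ov in readings:
        print(f"OV {ov:.1f} V -> {deviation_pct(ov, nominal):+.2f}% from nominal")
```

A positive result means the equipment is seeing more than its nominal voltage, and a negative result means less. Comparing the magnitude of this figure with the allowed tolerance shows whether the OV is still in the acceptable range.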

Nominal Voltage (NV) vs Rated Voltage (RV)

Nominal voltage and RV are related terms in electrical engineering but have distinct meanings and implications. Understanding the difference between the two is crucial for designing, operating, and maintaining electrical systems and devices properly. Differences between high voltage, medium voltage, and low voltage categories are based on standardized nominal values used in system classification.

Nominal voltage refers to the standard or reference voltage level assigned to an electrical system, circuit, or device. It is the ideal voltage at which the equipment is designed to operate under normal conditions. NV serves as a baseline value that enables manufacturers, engineers, and technicians to design, test, and rate electrical equipment consistently across various industries and applications.

On the other hand, RV is the maximum voltage at which an electrical device or component can operate continuously without incurring damage or experiencing a significant reduction in performance. RV is a critical parameter to ensure electrical equipment's safe and efficient functioning. Operating an electrical device within its RV range prevents excessive stress on the device, thereby reducing the risk of failure or shortened lifespan. Understanding the concept of electrical resistance is key to managing voltage drop and maintaining nominal voltage across circuits.

Nominal voltage is the target or reference voltage level for the design and operation of electrical systems. In contrast, RV defines the maximum allowable voltage for the continuous operation of electrical equipment. To ensure the efficient and safe performance of electrical systems, it is essential to maintain the operating voltage within the acceptable voltage tolerance range around the nominal voltage and not exceed the RV. The relationship between power factor and nominal voltage affects system efficiency, especially in industrial electrical networks.
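The two conditions described above, staying within tolerance of the NV and never exceeding the RV, can be combined into a single check. The following is a minimal sketch, not a definitive method: the `VoltageSpec` class, the `assess` function, and the 24V DC example with a 30V rating are all hypothetical and would need to be replaced with figures from the actual equipment datasheet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VoltageSpec:
    """Hypothetical container for the three figures discussed in the text."""
    nominal_v: float      # nominal voltage (NV), the design reference
    tolerance_pct: float  # allowed deviation around NV, in percent
    rated_v: float        # rated voltage (RV), the continuous maximum


def assess(spec: VoltageSpec, operating_v: float) -> str:
    """Classify an operating voltage against the rated limit and tolerance."""
    # The rated voltage is checked first because exceeding it is the more
    # serious condition, regardless of how the tolerance band is defined.
    if operating_v > spec.rated_v:
        return "exceeds rated voltage"
    delta = spec.nominal_v * spec.tolerance_pct / 100.0
    if abs(operating_v - spec.nominal_v) <= delta:
        return "within tolerance"
    return "outside tolerance"


if __name__ == "__main__":
    # Assumed example: a 24 V DC device, +/- 10% tolerance, rated for 30 V.
    spec = VoltageSpec(nominal_v=24.0, tolerance_pct=10.0, rated_v=30.0)
    for ov in (20.0, 25.0, 28.0, 31.0):
        print(f"{ov:.1f} V: {assess(spec, ov)}")
```

Keeping the rated limit separate from the tolerance band reflects the distinction drawn in this section: the tolerance describes where the equipment performs as intended, while the RV marks the point beyond which damage becomes a risk.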